Every business has operational drag.

Processes, tools, and technology compound quietly until growth starts feeling heavier than it should.

You know what needs to change. We partner with you to see it through.

One Company.

Three ways in.

From idea to shipped.

When something doesn't exist and it should, we build it. Custom software, internal tools, and client-facing platforms designed around how your operation actually works.

- Custom Application Development

- Internal Tooling & Dashboards

- Client-Facing Platforms

- API Design & Integration

- Mobile Applications

From stagnant to performing.

Most operational inefficiency is hiding in plain sight. We map the process, find what's costing you, and redesign it, whether that means workflow automation or a full operations overhaul.

- Process Analysis & Redesign

- Operations Consulting

- Workflow Automation

- System Integration

- Change Management

From performing to leading.

Strategy, governance, and intelligent systems for businesses ready to move beyond incremental gains. We advise where AI earns its place, then build the infrastructure to put it there.

- Business Strategy & Roadmapping

- AI Strategy & Implementation

- Data Architecture

- Compliance & Governance

- Executive Advisory

We don't just advise.

We ship.

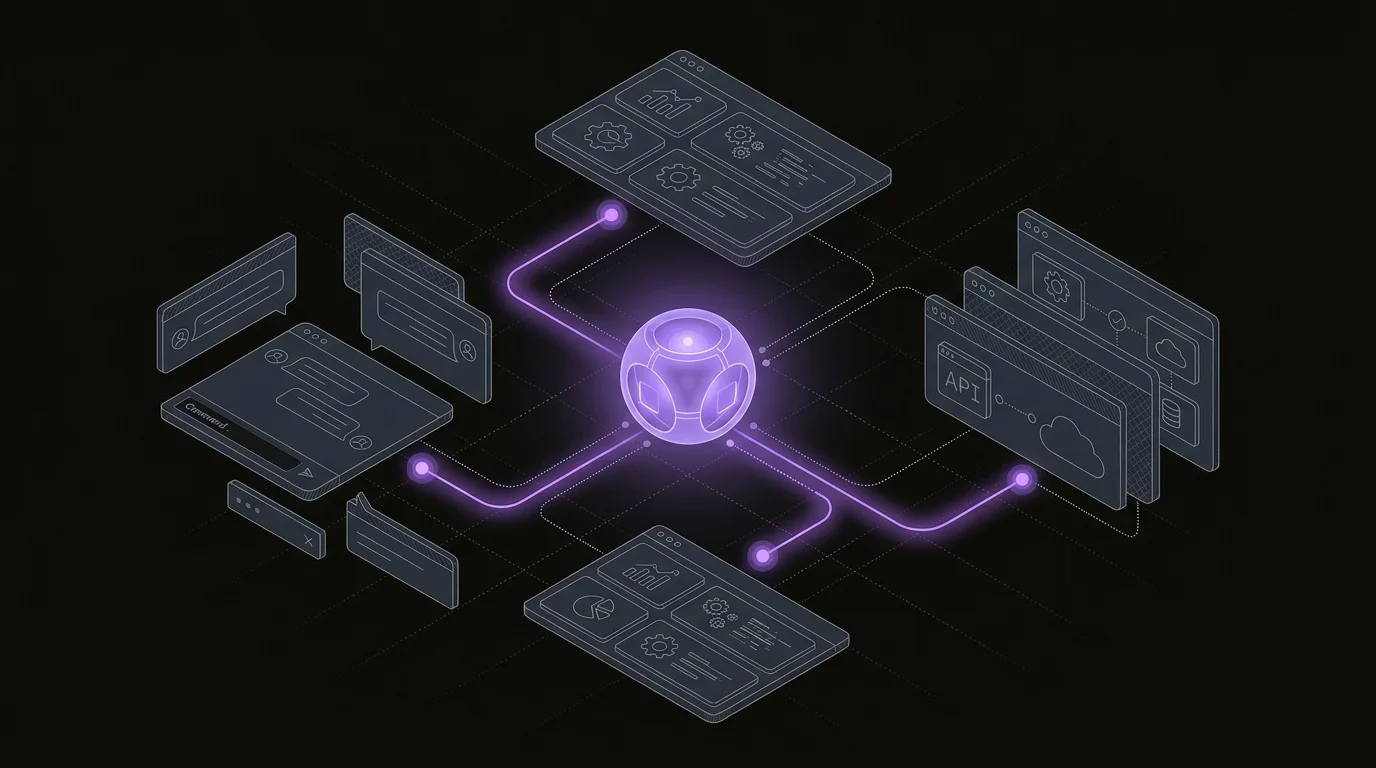

Agent Studio

A familiar chat interface with serious power behind it. Agent Studio connects to any model and any tool in your stack, takes actions on its own, and works hand-in-hand with knowledge bases like Cerebro, so any team can put capable AI agents to work.

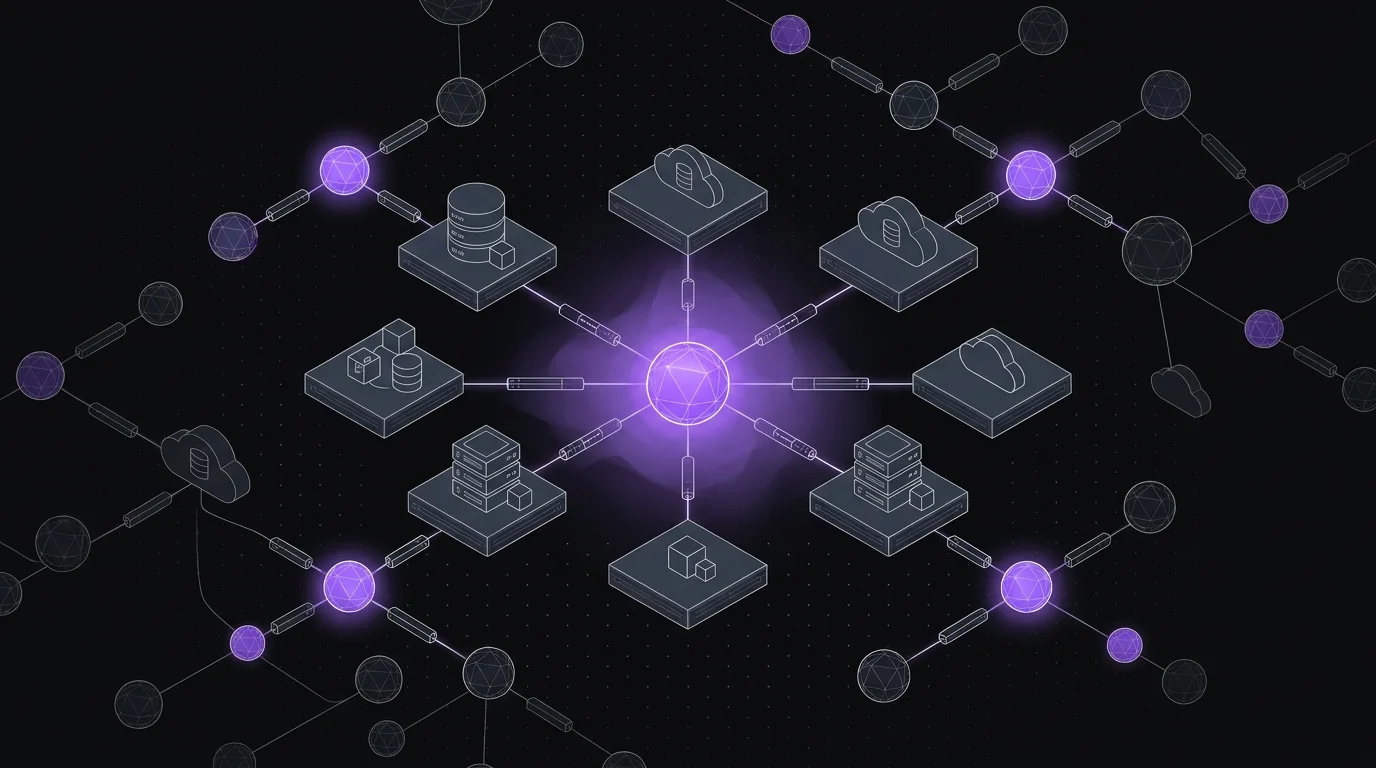

Cerebro

Your AI's missing memory. Cerebro surfaces the relationships buried in your unstructured data. We then train custom models and tune rules engines around how your business actually works, so your agents understand you, not just the internet.

From discovery to lasting support.

A proven path that keeps your systems healthy and evolving as your business grows.

Discover

We map the problem, not the solution. Before anything is built, we understand how your business actually operates.

Design

Architecture, strategy, or process blueprint. We build the plan before we touch a line of code.

Deliver

Build it, fix it, or deploy it. We execute against the plan with precision and report progress clearly.

Embed

Handover, training, and ongoing partnership. We don’t disappear at launch; we make sure it sticks.

Support

Monitoring, iteration, and a direct line when you need it. We keep systems healthy as your business grows.

Hey, look at your demo.

Drop in your email and we’ll book a focused walkthrough around the systems, workflows, and AI opportunities inside your business.

No generic pitch. We’ll use the call to map where automation and custom software can actually move the needle.